If you have ever asked a software development firm how much a project will cost and received the answer "it depends," the firm was not being evasive. It was telling you the truth. Software development cost estimation is genuinely complex, and the businesses that understand why come out of the process with more accurate budgets, better partner relationships, and fewer expensive surprises mid-project.

This guide explains how software development is actually costed, what drives those costs, how pricing models work, and what to look for in a partner who will give you an estimate you can trust.

Why Software Cost Estimation Is Difficult

No Two Projects Are the Same

Unlike buying a physical product with a fixed price, custom software development involves creating something that does not yet exist, to meet requirements that are often still being clarified, using technologies chosen to fit a specific context, in a timeline shaped by priorities that may shift. Every one of those variables affects cost, and none of them can be collapsed into a simple formula.

The consequence of underestimating this complexity is significant. According to the PMI Pulse of the Profession 2025 report, which surveyed nearly 3,000 project professionals globally, only 34% of projects are delivered on time and within budget, with the average cost overrun running at 27% per project. These are not outliers. They reflect what happens when estimation is treated as a formality rather than a discipline.

Good cost estimation is not guesswork. It is a structured process that works backward from a clear understanding of the problem, the scope, and the constraints. Organisations that approach it that way get budgets they can rely on. Those that skip the work end up managing change requests, timeline extensions, and eroding confidence in the project long before it delivers.

What Actually Drives Software Development Costs

Scope and Complexity

The most significant driver of cost is the scope of what is being built. A lightweight internal tool with a handful of features and a single user role will cost a fraction of what a multi-tenant enterprise platform with complex business logic, role-based permissions, and a sophisticated data model requires. The gap between those two ends of the spectrum is not incremental. It can be an order of magnitude. If you are still deciding whether to build or buy, our guide to custom software versus off-the-shelf solutions covers that decision in full.

Within scope, complexity compounds cost. Features that look similar on a requirements list can vary enormously in the engineering effort required to build them reliably. A basic data export takes hours to build. A real-time, configurable reporting engine with aggregation logic across multiple data sources takes weeks. Understanding that distinction before a project starts is what separates accurate estimates from aspirational ones.

Integrations

Modern software rarely operates in isolation. The number and complexity of integrations with external systems, whether CRMs, ERPs, payment gateways, third-party APIs, or legacy internal platforms, is one of the most consistently underestimated cost drivers in software projects.

Each integration introduces its own documentation requirements, authentication complexity, error handling, and testing overhead. A single well-documented modern API might add a few days of effort. An integration with a poorly documented legacy system, or one that requires data transformation across incompatible schemas, can add weeks. When a project requires five or six integrations, the cumulative effect on both cost and timeline is substantial.
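To make that cumulative effect concrete, the sketch below sums a low and a high effort figure for each integration. The integration names and day ranges are hypothetical assumptions chosen for illustration, not benchmarks. In practice, the ranges would come from reviewing each system's documentation and data model during discovery.

```python
# Hypothetical integrations: (name, low estimate, high estimate) in person days.
# These figures are illustrative assumptions, not industry benchmarks.
INTEGRATIONS = [
    ("Modern payment API", 2, 4),
    ("CRM", 4, 8),
    ("ERP", 8, 20),
    ("Legacy order system", 10, 25),
    ("Marketing platform", 3, 6),
]


def integration_effort_range(integrations):
    """Return the (low, high) total effort in person days across all integrations."""
    low = sum(low_days for _, low_days, _ in integrations)
    high = sum(high_days for _, _, high_days in integrations)
    return low, high


low, high = integration_effort_range(INTEGRATIONS)
print(f"Integrations: {low}-{high} person days")
```

The width of the resulting range matters as much as its midpoint: most of the spread here comes from the two least predictable systems.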

Data and AI Components

Projects that include AI and data engineering components carry additional cost drivers that pure application development does not. Building data pipelines that ingest, transform, and serve data reliably requires specialist engineering. Developing or integrating AI and machine learning models requires a different skill set again, along with the infrastructure to train, test, and monitor those models in production.

The scope of AI work is also harder to estimate upfront than traditional development, because a model's performance depends on factors such as data quality, data volume, and the complexity of the problem, which are often only fully understood once work has begun. Experienced teams factor this uncertainty into their estimates. Teams without that experience frequently underestimate it.
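One common way to build that uncertainty into an estimate is a three-point (PERT) estimate, which weights optimistic, most likely, and pessimistic figures. This is a standard estimation technique offered as an illustration, not necessarily the method any particular team uses, and the day figures below are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ThreePointEstimate:
    """Effort in person days for one work item (all values are assumptions)."""
    optimistic: float
    most_likely: float
    pessimistic: float

    def expected(self) -> float:
        # PERT weighted mean: (O + 4M + P) / 6
        return (self.optimistic + 4 * self.most_likely + self.pessimistic) / 6

    def std_dev(self) -> float:
        # Conventional PERT approximation of spread: (P - O) / 6
        return (self.pessimistic - self.optimistic) / 6


# Hypothetical items: a well-understood UI feature versus an ML model whose
# performance depends on data quality that is not yet known.
ui_feature = ThreePointEstimate(8, 10, 14)
ml_model = ThreePointEstimate(15, 25, 60)

for name, est in [("UI feature", ui_feature), ("ML model", ml_model)]:
    print(f"{name}: expected {est.expected():.1f} days, +/- {est.std_dev():.1f}")
```

The much wider spread on the ML item is the signal that its estimate needs more contingency, even before the expected value is considered.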

User Experience Design

The complexity of a product's design layer is another variable that is often underweighted in initial estimates. A single-role product with a linear workflow requires a different design investment than a platform serving five distinct user types, each with different needs, mental models, and workflows. Accessibility requirements, responsive design across device types, and the need for iterative user research all add to the design effort and therefore to the overall cost.

Security and Compliance

Projects handling personal data, financial information, or operating in regulated industries carry additional requirements that must be built into the architecture from the outset. GDPR compliance, data residency requirements, role-based access controls, audit logging, and penetration testing are structural requirements that affect how the system is designed, not just what features it includes. Leaving these until after the core system is built is one of the more reliably expensive mistakes in software delivery.

Understanding Effort-Based Estimation

What Man Days Actually Mean

Software projects are typically estimated in man days, also referred to as person days, where one man day is the work one person completes in a single working day. A simple project might require five to ten days of effort. A complete enterprise platform might require three hundred to five hundred days or more across multiple disciplines.

The critical point is that man days reflect total effort, not just coding time. A well-scoped estimate accounts for the full delivery team and all the activities required to move from requirement to working software. This includes frontend and backend development, UX design and iteration, technical architecture decisions, code review and quality assurance, testing across environments, project management and stakeholder communication, documentation, deployment, and post-launch support.
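A minimal sketch of that point, assuming a hypothetical breakdown of person days by discipline: comparing the full total with the coding-only subtotal shows how much effort a development-only estimate would leave out. The figures are invented for illustration and will vary widely between projects.

```python
# Hypothetical effort breakdown in person days for a mid-sized project.
# Disciplines mirror the activities listed above; numbers are assumptions.
EFFORT_BY_DISCIPLINE = {
    "frontend development": 40,
    "backend development": 55,
    "UX design and iteration": 20,
    "technical architecture": 10,
    "code review and QA": 18,
    "testing across environments": 15,
    "project management": 20,
    "documentation": 6,
    "deployment": 5,
    "post-launch support": 10,
}

DEVELOPMENT_ONLY = {"frontend development", "backend development"}


def total_effort(breakdown: dict[str, float]) -> float:
    """Sum effort across every discipline."""
    return sum(breakdown.values())


def development_effort(breakdown: dict[str, float], dev_keys: set[str]) -> float:
    """Sum effort for coding disciplines only."""
    return sum(days for name, days in breakdown.items() if name in dev_keys)


full = total_effort(EFFORT_BY_DISCIPLINE)
dev = development_effort(EFFORT_BY_DISCIPLINE, DEVELOPMENT_ONLY)
print(f"Full estimate: {full} days; development only: {dev} days")
print(f"A coding-only estimate would miss {full - dev} days ({(full - dev) / full:.0%})")
```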

When an estimate accounts only for development time and ignores the surrounding disciplines, it will be wrong, and the shortfall will become visible at exactly the point in the project where it is most disruptive.

Translating Effort Into Cost

Man days multiplied by the daily rate of the team members involved produces the labour cost of the project. Day rates vary significantly based on team seniority, specialist skills, geography, and engagement model. A senior architect working on a complex data integration commands a different rate than a mid-level frontend developer building standard UI components, and a well-structured project will use each appropriately.
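As a worked example, the labour cost is person days multiplied by day rate, summed across roles. The roles, day counts, and GBP rates below are hypothetical placeholders, not market rates.

```python
# Hypothetical staffing plan: role -> (person days, day rate in GBP).
# Rates are placeholders for illustration only.
STAFFING = {
    "senior architect": (15, 900),
    "backend developer": (60, 600),
    "frontend developer": (45, 550),
    "QA engineer": (25, 450),
    "project manager": (20, 650),
}


def labour_cost(staffing: dict[str, tuple[int, int]]) -> int:
    """Sum of person days multiplied by each role's day rate."""
    return sum(days * rate for days, rate in staffing.values())


print(f"Labour cost: {labour_cost(STAFFING):,} GBP")
```

Keeping roles separate, rather than using one blended rate, makes it visible when senior time is being spent on work a mid-level developer could do.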

Fixed Price vs Time and Materials

Fixed Price

A fixed price model sets a defined scope and delivers it for a pre-agreed cost. The appeal is predictability: the organisation knows what it will spend and what it will receive. The limitations are significant in practice. Fixed price contracts typically include risk padding to protect the supplier against scope uncertainty. They offer limited flexibility to change requirements as the project progresses, and change requests, which are almost inevitable in any meaningful software project, are managed through a formal process that adds both cost and friction.

Fixed price works best when the scope is genuinely well understood before work begins, when requirements are unlikely to evolve, and when the risk of change is low. For most custom software projects of any meaningful complexity, those conditions are not all met simultaneously.

Time and Materials

A time and materials model charges for actual effort spent, billed against agreed rates and tracked transparently. The organisation pays for the effort the team actually spends, and requirements can evolve without triggering a formal change process. Priorities can be adjusted, and the project can respond to what is learned during development.

The trade-off is that upfront cost certainty is lower. Time and materials requires a higher degree of trust and collaboration, and organisations without robust project governance can find costs drifting. The answer is not to avoid the model, but to ensure that project reporting, sprint reviews, and budget tracking are built into the engagement structure from the start.
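Budget tracking under time and materials can be as simple as comparing cumulative effort against plan after each sprint review. The sketch below assumes a hypothetical 120-day budget, a plan of 20 days per sprint, and a 10% tolerance before a sprint is flagged for discussion. All of these are illustrative assumptions, not a recommended policy.

```python
from itertools import accumulate


def track_burn(budget_days, planned_per_sprint, actual_per_sprint, tolerance=0.10):
    """Compare cumulative actual effort with plan after each sprint.

    Returns a list of (sprint, actual_to_date, planned_to_date, remaining, flagged).
    """
    rows = []
    for sprint, actual in enumerate(accumulate(actual_per_sprint), start=1):
        planned = planned_per_sprint * sprint
        remaining = budget_days - actual
        # Flag when cumulative spend exceeds plan by more than the tolerance.
        flagged = actual > planned * (1 + tolerance)
        rows.append((sprint, actual, planned, remaining, flagged))
    return rows


# Hypothetical actuals in person days, as reported at each sprint review.
rows = track_burn(budget_days=120, planned_per_sprint=20, actual_per_sprint=[18, 21, 24, 26])

for sprint, actual, planned, remaining, flagged in rows:
    note = " <- review" if flagged else ""
    print(f"Sprint {sprint}: {actual} days used vs {planned} planned, {remaining} remaining{note}")
```

The point is not the tooling but the habit: drift surfaces after a single sprint, while it is still cheap to discuss.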

The Hybrid Approach Most Successful Projects Use

The majority of well-run modern software projects use a hybrid model. Discovery, the initial phase in which requirements are defined, architecture is designed, and effort is estimated with precision, is conducted on a fixed basis. Development and iteration then proceed on a time and materials basis, with the detail produced in discovery providing the planning confidence that time and materials alone cannot offer.

This approach combines the transparency and flexibility of time and materials with the upfront structure that organisations need to commit budgets and set expectations internally. It also ensures that the estimate underpinning the project is based on actual understanding of the requirements rather than assumptions made before any discovery work has been done.
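To illustrate how the two halves combine, the sketch below assumes a hypothetical fixed discovery fee and a delivery effort range produced by discovery, priced at a single blended day rate. The figures, and the use of one blended rate, are simplifying assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HybridQuote:
    """Hypothetical hybrid engagement: fixed discovery plus T&M delivery."""
    discovery_fee: int        # fixed price for the discovery phase
    day_rate: int             # assumed blended T&M day rate
    delivery_days_low: int    # effort range produced by discovery
    delivery_days_high: int

    def budget_range(self) -> tuple[int, int]:
        """Total budget range: fixed discovery fee plus T&M delivery range."""
        low = self.discovery_fee + self.day_rate * self.delivery_days_low
        high = self.discovery_fee + self.day_rate * self.delivery_days_high
        return low, high


quote = HybridQuote(discovery_fee=12_000, day_rate=600,
                    delivery_days_low=150, delivery_days_high=190)
low, high = quote.budget_range()
print(f"Budget range: {low:,} to {high:,}")
```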

The Most Important Questions to Ask Any Development Partner

Transparency in cost estimation is a signal of how a development partner manages delivery. The questions worth asking before committing to any engagement include the following.

How do you estimate effort, and what is that estimate based on? A credible answer describes a process: discovery, requirements analysis, architecture review, and estimation.

What assumptions and risks are built into this estimate? Every estimate makes assumptions. A trustworthy partner will state them explicitly rather than leaving them implicit.

What happens to the estimate if requirements change? The answer should describe a clear, fair process, not a defensive one.

What is included in the day rate, and what is not? Project management, testing, and deployment are costs. If they are not in the estimate, they will appear later.

How do you ensure delivery stays within the agreed budget and timeline? The answer should describe delivery milestones, reporting, and governance, not just good intentions.

What does success look like at the end of this project, and how will you measure it? Partners who think about outcomes rather than just outputs are the ones worth working with.

Evaluating the "New Low" Price

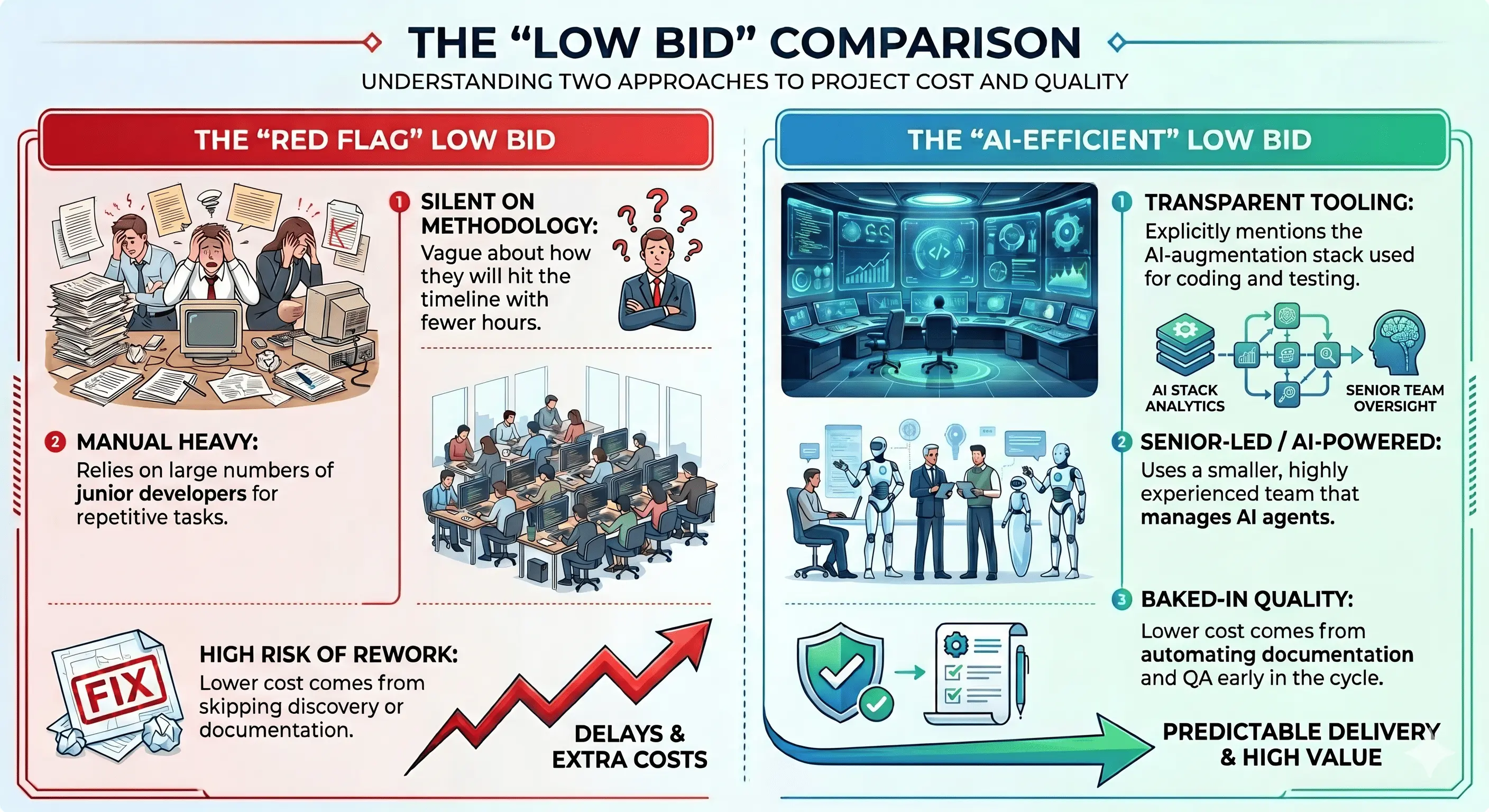

In 2026, a significantly lower estimate requires a different kind of due diligence. It is no longer safe to assume that a low price simply means high risk; instead, you need to determine whether that price is driven by technological leverage or by traditional under-scoping.

The "AI Efficiency" Dividend

A partner using an AI-augmented development stack can often deliver high-quality code at a noticeably lower cost than a traditional team. When reviewing a competitive bid, look for evidence of:

- Automated Testing & QA: Are they using AI to generate edge-case tests that would take a human days to write?

- Code Generation & Scaffolding: Is the team using advanced LLMs to handle boilerplate code, allowing senior architects to focus exclusively on high-value business logic?

- Accelerated Design-to-Code: Are they using tools that convert UX wireframes directly into clean frontend components?

How to Tell the Difference

To distinguish between a "cheap" bid (risky) and an "efficient" bid (modern), your due diligence questions must evolve. Instead of only asking why a bid is cheaper, ask how the lower cost is achieved.

How to Get an Accurate Estimate

Start With a Discovery Phase

The single most effective investment an organisation can make before committing to a full software build is a structured discovery phase. This is a time-limited, scoped engagement in which the custom software development partner works with the organisation to define requirements precisely, assess technical complexity, identify integration requirements, and produce a well-grounded estimate for the full project.

Discovery does not prevent surprises entirely, but it dramatically reduces their frequency and magnitude. It also provides a foundation for the project governance that keeps delivery on track once full development begins.

Define Business Goals Before Features

Software requirements that start with business outcomes rather than feature lists produce better estimates and better software. Understanding what the system needs to achieve, and for whom, allows the development team to ask better questions, identify where complexity is being underestimated, and propose architectural approaches that serve the organisation's long-term needs rather than just its immediate requests.

Prioritise With MVP Thinking

Not everything in a requirements list needs to be in the first release. Identifying the minimum viable product, the smallest version of the system that delivers real value, reduces the cost and risk of the initial build and creates the opportunity to learn from real use before investing in additional features. Experienced partners will push organisations toward this thinking. It is a sign of commercial maturity, not a limitation of ambition.

How Experienced Partners Improve Cost Outcomes

The difference between working with an experienced data consulting and development partner and one that is less experienced is not just the quality of what gets built. It is the total cost of getting there.

Experienced teams identify architectural risks early, before they become expensive rework. They optimise the system design for maintainability and scalability, reducing the long-term cost of ownership rather than just the short-term build cost. They bring commercial realism to scope discussions, pushing back on requirements that add complexity without proportionate value. And they align technical decisions with business objectives in ways that ensure the system serves the organisation's needs as those needs evolve.

The Virtual Forge works with organisations to scope, estimate, and deliver custom software with the transparency and commercial discipline that produces projects clients are still proud of years after launch, not just at go-live.

Moving Forward

Software development cost estimation is not about arriving at a number. It is about understanding the problem, defining the scope with rigour, choosing the delivery model that fits the project's nature, and working with a partner whose estimate reflects genuine understanding rather than competitive pricing strategy.

Organisations that approach it this way avoid the costly mistakes that come from underscoped projects and misaligned expectations. They build better systems, manage budgets more accurately, and achieve stronger return on their investment.

If you are planning a software project and want a clear, grounded estimate built on real discovery work, our team is ready to start that conversation. Get in touch with The Virtual Forge.
